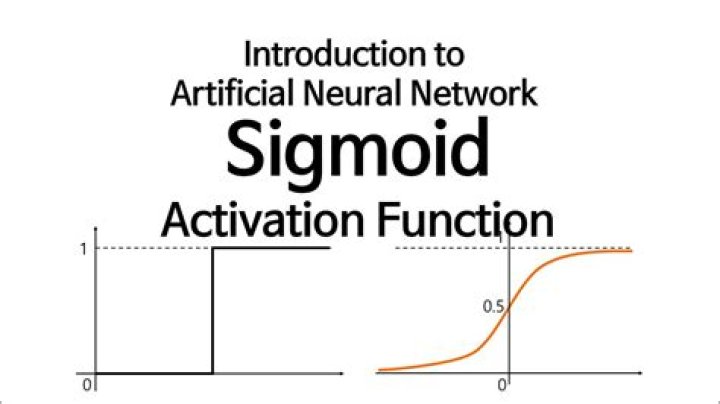

Why is the sigmoid function used in neural networks?

Accordingly, what is the use of the sigmoid function in a neural network?

The sigmoid function (Wikipedia has an article about it) is used in neural networks to give logistic neurons a real-valued output that is a smooth and bounded function of their total input. It also has a conveniently simple derivative, which can be written in terms of the function's own output, and this makes learning the weights of a neural network easier.
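As a rough illustration of the "nice derivative" point, here is a minimal sketch of the logistic sigmoid and its derivative in plain Python. The function names are chosen for this example only, and the derivative uses the standard identity that it equals the output times one minus the output.

```python
import math


def sigmoid(x: float) -> float:
    """Logistic sigmoid, split by sign so math.exp never overflows."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def sigmoid_derivative(x: float) -> float:
    """Derivative written through the function value: s * (1 - s)."""
    s = sigmoid(x)
    return s * (1.0 - s)


if __name__ == "__main__":
    for x in (-5.0, 0.0, 5.0):
        print(x, sigmoid(x), sigmoid_derivative(x))
```

Because the derivative reuses the value already computed in the forward pass, a training loop does not need a second exponential to get the gradient.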

Secondly, why do we use a non-linear activation function?

An activation function applies a non-linear mapping to each neuron's weighted input. The non-linearity is needed because the aim in a neural network is to produce a non-linear decision boundary via non-linear combinations of the weights and inputs.
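To see why the non-linearity matters, consider the following small sketch. It stacks two one-input linear layers, with weights and biases that are arbitrary values picked for the example, and shows that without an activation in between they collapse into a single linear map. With a ReLU between them, the result is no longer a straight line.

```python
def make_linear(w: float, b: float):
    """Return the one-input linear layer x -> w * x + b."""
    def layer(x: float) -> float:
        return w * x + b
    return layer


def relu(x: float) -> float:
    return max(0.0, x)


# Hypothetical weights and biases, chosen only for illustration.
layer1 = make_linear(2.0, 1.0)
layer2 = make_linear(-3.0, 0.5)


def stacked_linear(x: float) -> float:
    """Two linear layers with no activation in between."""
    return layer2(layer1(x))


# Algebraically: -3 * (2x + 1) + 0.5 = -6x - 2.5, a single linear layer.
collapsed = make_linear(-6.0, -2.5)


def stacked_relu(x: float) -> float:
    """The same two layers with a ReLU between them."""
    return layer2(relu(layer1(x)))


if __name__ == "__main__":
    for x in (-2.0, -1.0, 0.0, 1.0):
        print(x, stacked_linear(x), collapsed(x), stacked_relu(x))
```

The first two printed columns agree for every input, while the ReLU version is flat for inputs where the first layer's output is negative and sloped elsewhere.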

Keeping this in view, why is an activation function used in a neural network?

The purpose of the activation function is to introduce non-linearity into the output of a neuron. Each neuron in a network computes a weighted sum of its inputs, adds a bias, and then applies its activation function to the result.
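The weight, bias and activation steps of a single neuron can be sketched as follows. The helper name `neuron` and the input and weight values are assumptions made for this example and do not come from any particular library.

```python
import math
from typing import Callable, Sequence


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs: Sequence[float],
           weights: Sequence[float],
           bias: float,
           activation: Callable[[float], float]) -> float:
    """Weighted sum of the inputs plus bias, passed through the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


if __name__ == "__main__":
    # Hypothetical values: 0.5 * 1.0 + (-0.25) * 2.0 + 0.0 = 0.0
    print(neuron([1.0, 2.0], [0.5, -0.25], 0.0, sigmoid))  # prints 0.5
```

Passing the activation in as a parameter keeps the weighted-sum step separate from the choice of non-linearity, so the same neuron can be tried with different activation functions.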

What is a sigmoid layer?

A sigmoid function is a mathematical function having a characteristic "S"-shaped curve or sigmoid curve. A standard choice for a sigmoid function is the logistic function shown in the first figure and defined by the formula $g(x) = \frac{1}{1 + e^{-x}} = \frac{e^x}{1 + e^x}$.

Related Questions and Answers

Why is ReLU used?

The ReLU function is another non-linear activation function that has gained popularity in the deep learning domain. ReLU stands for Rectified Linear Unit. The main advantage of using the ReLU function over other activation functions is that it does not activate all the neurons at the same time: a neuron whose input is negative outputs zero.

What is Softplus?

Softplus is a smooth alternative to traditional activation functions: it is differentiable everywhere and its derivative is easy to derive. That derivative is also a pleasant surprise, because it is the logistic sigmoid function. The softplus function is $f(x) = \ln(1 + e^x)$.

Why is the backpropagation algorithm required?

Essentially, backpropagation is an algorithm used to calculate derivatives quickly. Because backpropagation requires a known, desired output for each input value in order to calculate the loss function gradient, it is usually classified as a type of supervised machine learning.

What does a softmax layer do?

A softmax layer allows the neural network to perform multi-class classification. In short, for an image the network can report the probability that it shows a dog alongside the probabilities that it shows each of the other classes.

How is ReLU nonlinear?

ReLU is not linear. The simple answer is that the ReLU output is not a straight line: it bends at the origin (x = 0). The more interesting point is the consequence of this non-linearity. In simple terms, linear functions only allow you to dissect the feature plane using a straight line.

What is the role of the hidden layer?

A hidden layer sits between the input and output layers, and the output of one layer is the input of the next. Hidden layers compute a net input from their weighted inputs and then apply an activation function to it to produce the layer's actual output.

What is the range of the sigmoid function?

The logistic sigmoid function, a.k.a. the inverse logit function, is $g(x) = \frac{e^x}{1 + e^x}$. Its outputs range from 0 to 1, and are often interpreted as probabilities (in, say, logistic regression).

What is the difference between softmax and sigmoid?

Getting to the point, the basic practical difference between sigmoid and softmax is that while both give outputs in the [0, 1] range, softmax ensures that the outputs along the specified dimension (for example, channels) sum to 1, so they form a probability distribution. Sigmoid just squashes each output independently to a value between 0 and 1.

Why do we use activation functions?

The purpose of an activation function is to add a non-linear property to the function that the neural network computes. Without activation functions, the neural network could perform only linear mappings from inputs x to outputs y.

What are the types of activation functions?

Popular types of activation functions and when to use them:

- Binary Step Function. The first thing that comes to mind when we think of an activation function is a threshold-based classifier, i.e. whether or not the neuron should be activated.

- Linear Function.

- Sigmoid.

- Tanh.

- ReLU.

- Leaky ReLU.
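The six functions listed above can be sketched in plain Python as follows. This is a minimal illustration rather than any library's implementation. The step threshold of 0, the convention that the step fires at exactly the threshold, and the leaky slope `alpha=0.01` are assumptions made for the example.

```python
import math


def binary_step(x: float, threshold: float = 0.0) -> float:
    """1 if the input reaches the (assumed) threshold, else 0."""
    return 1.0 if x >= threshold else 0.0


def linear(x: float, slope: float = 1.0) -> float:
    """Output proportional to the input; no non-linearity."""
    return slope * x


def sigmoid(x: float) -> float:
    """Logistic sigmoid; output lies between 0 and 1."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    z = math.exp(x)
    return z / (1.0 + z)


def tanh(x: float) -> float:
    """Hyperbolic tangent; output lies between -1 and 1."""
    return math.tanh(x)


def relu(x: float) -> float:
    """Zero for negative inputs, identity otherwise."""
    return max(0.0, x)


def leaky_relu(x: float, alpha: float = 0.01) -> float:
    """Like ReLU, but negative inputs keep a small slope alpha (assumed 0.01)."""
    return x if x > 0 else alpha * x


if __name__ == "__main__":
    for name, fn in [("step", binary_step), ("linear", linear),
                     ("sigmoid", sigmoid), ("tanh", tanh),
                     ("relu", relu), ("leaky_relu", leaky_relu)]:
        print(name, [round(fn(x), 4) for x in (-2.0, 0.0, 2.0)])
```

Printing each function at a negative, zero and positive input makes the differences easy to compare: only the linear function and leaky ReLU pass a negative signal through, and only sigmoid and tanh saturate.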