What is the letter for entropy?

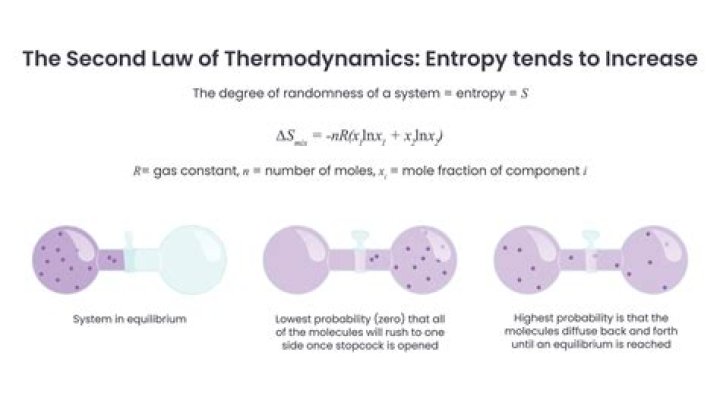

Entropy is denoted by the letter S and has units of joules per kelvin. A change in entropy can be positive or negative. According to the second law of thermodynamics, the entropy of a system can only decrease if the entropy of another system increases by at least as much.

Is entropy an H?

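In information theory, yes: entropy there is conventionally written H, whereas in thermodynamics H denotes enthalpy and entropy is S. The concept of information entropy was introduced by Claude Shannon in his 1948 paper “A Mathematical Theory of Communication”, and is also referred to as Shannon entropy. As an example, consider a biased coin with probability p of landing on heads and probability 1 − p of landing on tails. Its entropy is H = −p log₂ p − (1 − p) log₂(1 − p) bits, which peaks at 1 bit for a fair coin (p = 1/2).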
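
The biased-coin example can be made concrete with a short Python sketch. The function name `binary_entropy` and the sample probabilities are illustrative choices, not something taken from Shannon's paper; the formula used is the standard two-outcome Shannon entropy in bits.

```python
import math


def binary_entropy(p: float) -> float:
    """Shannon entropy, in bits, of a coin that lands heads with probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if p in (0.0, 1.0):
        return 0.0  # a certain outcome carries no uncertainty
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


fair = binary_entropy(0.5)    # a fair coin gives the maximum, 1 bit
biased = binary_entropy(0.9)  # a heavily biased coin gives less than 1 bit
print(fair, biased)
```

The entropy is symmetric in p and 1 − p, so a coin with 90% heads is exactly as uncertain as one with 90% tails.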

What does S stand for in entropy?

- Entropy (S) is a thermodynamic state function which can be described qualitatively as a measure of the amount of disorder present in a system.

- From a chemical perspective, we usually mean molecular disorder.

What is entropy and its symbol?

The symbol for entropy is S. The symbol was chosen by Rudolf Clausius, a German physicist, in the 1860s.

What is the unit of entropy S?

- Common symbol: S

- SI unit: joules per kelvin (J⋅K⁻¹)

- In SI base units: kg⋅m²⋅s⁻²⋅K⁻¹

Why is entropy measured in J/K?

Entropy determines the direction of spontaneous heat flow: thermal energy always flows spontaneously from regions of higher temperature to regions of lower temperature, in the form of heat. … Thermodynamic entropy has the dimension of energy divided by temperature, which corresponds to the unit joules per kelvin (J/K) in the International System of Units.

Can entropy be multiple?

For a dataset with two classes, entropy measured in bits lies between 0 and 1. With more classes, entropy can be greater than 1 (up to log₂ of the number of classes), but it means the same thing: a very high level of disorder.
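
To see how the number of classes affects the range, here is a minimal Python sketch that computes the entropy of a list of class labels in bits. The helper name `label_entropy` and the toy label lists are made up for illustration.

```python
import math
from collections import Counter


def label_entropy(labels) -> float:
    """Shannon entropy, in bits, of the class distribution in `labels`."""
    total = len(labels)
    counts = Counter(labels)
    # Sum -p * log2(p) over the classes that actually occur.
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


two_classes = label_entropy(["a", "b", "a", "b"])   # balanced, 2 classes
four_classes = label_entropy(["a", "b", "c", "d"])  # balanced, 4 classes
print(two_classes, four_classes)
```

With two balanced classes the result is 1 bit; with four balanced classes it is 2 bits, which is log₂ 4.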

What is entropy in simple terms?

The entropy of an object is a measure of the amount of energy which is unavailable to do work. Entropy is also a measure of the number of possible arrangements the atoms in a system can have. In this sense, entropy is a measure of uncertainty or randomness.

What is entropy in chemistry class 11?

Entropy is a measure of randomness or disorder of the system. The greater the randomness, the higher the entropy. … The entropy change during a process is defined as the amount of heat (q) absorbed isothermally and reversibly divided by the absolute temperature (T) at which the heat is absorbed.

Why is entropy Q/T?

For heat transferred reversibly at constant temperature, the change in entropy (delta S) is equal to the heat transfer (delta Q) divided by the temperature (T). The second law states that there exists a useful state variable called entropy.
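
As a rough numerical illustration of delta S = Q/T, the Python sketch below follows a fixed amount of heat leaving a hot reservoir and entering a cold one. The reservoir temperatures (400 K and 300 K) and the heat (1000 J) are hypothetical values, and each reservoir is assumed large enough that its temperature stays constant.

```python
def entropy_change(heat_joules: float, temperature_kelvin: float) -> float:
    """Entropy change (J/K) for heat exchanged reversibly at a fixed temperature."""
    if temperature_kelvin <= 0:
        raise ValueError("absolute temperature must be positive")
    return heat_joules / temperature_kelvin


q = 1000.0                           # hypothetical heat transferred, in joules
ds_hot = entropy_change(-q, 400.0)   # hot reservoir loses heat: negative
ds_cold = entropy_change(q, 300.0)   # cold reservoir gains heat: positive
ds_total = ds_hot + ds_cold          # net change for the pair of reservoirs
print(ds_hot, ds_cold, ds_total)
```

Because the same heat is divided by a smaller temperature on the cold side, the gain there outweighs the loss on the hot side, so the total entropy of the two reservoirs increases.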

What is Delta E in thermodynamics?

ΔE is the change in internal energy of a system. ΔE = q + w (1st law of thermodynamics).
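
A small Python sketch of the first-law bookkeeping follows. It assumes the sign convention in which q is heat absorbed by the system and w is work done on the system, so work done by the system is entered as a negative number; the numerical values are hypothetical.

```python
def internal_energy_change(q: float, w: float) -> float:
    """Return delta E = q + w (joules), with w counted as work done ON the system."""
    return q + w


# Hypothetical case: the system absorbs 500 J of heat and does 200 J of work
# on its surroundings, so w = -200 J under this sign convention.
delta_e = internal_energy_change(q=500.0, w=-200.0)
print(delta_e)
```

Some textbooks write the first law as ΔE = q − w with w defined as work done by the system; the two forms agree once the sign of w is translated.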

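What happens when Delta S is zero?

The total delta S (system plus surroundings) equals zero when a process is reversible; the system stays in equilibrium with its surroundings throughout. Separately, because entropy is a state function, delta S of the system is zero for any process that starts and ends in the same state, such as a complete cycle.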

What is G in thermodynamics?

Gibbs free energy, also known as the Gibbs function, Gibbs energy, or free enthalpy, is a quantity that is used to measure the maximum amount of non-expansion work obtainable from a thermodynamic system when the temperature and pressure are kept constant. Gibbs free energy is denoted by the symbol ‘G’.

How do I calculate entropy?

- Entropy is a measure of probability and the molecular disorder of a macroscopic system.

- If each configuration is equally probable, then the entropy is the natural logarithm of the number of configurations, multiplied by Boltzmann’s constant: S = kB ln W.
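
The formula S = kB ln W can be evaluated directly. The Python sketch below uses the exact SI value of Boltzmann's constant; the example system (100 independent two-state particles, so W = 2^100 equally probable configurations) is a hypothetical illustration.

```python
import math

K_B = 1.380649e-23  # Boltzmann's constant in J/K (exact in the 2019 SI)


def boltzmann_entropy(num_configurations: int) -> float:
    """Entropy S = kB * ln(W) for W equally probable configurations."""
    if num_configurations < 1:
        raise ValueError("there must be at least one configuration")
    return K_B * math.log(num_configurations)


n_particles = 100                       # hypothetical two-state particles
s = boltzmann_entropy(2 ** n_particles)  # W = 2**N configurations
print(s)
```

A system with a single possible configuration (W = 1) has zero entropy, and because ln(2^N) = N ln 2, the entropy here grows in proportion to the number of particles.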

What is the SI unit of entropy?

The SI unit of entropy is the joule per kelvin (J/K).

What is the enthalpy of vaporization of water?

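Water has a heat of vaporization of 40.65 kJ/mol at its normal boiling point, which is about 540 calories per gram; the often-quoted figure of 586 calories per gram applies to evaporation near room temperature. A considerable amount of heat energy is therefore required to turn liquid water into vapor. Evaporation occurs at the surface of the water.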
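
The molar value can be converted to a per-gram figure and combined with the boiling temperature to estimate the entropy of vaporization, as in this Python sketch. The molar mass of water (18.015 g/mol), the thermochemical calorie (4.184 J) and the normal boiling point (373.15 K) are standard values that do not appear in the text above, and the calculation assumes vaporization at that boiling point.

```python
H_VAP_J_PER_MOL = 40_650.0      # heat of vaporization of water, from the text
MOLAR_MASS_G_PER_MOL = 18.015   # molar mass of water (assumed standard value)
JOULES_PER_CALORIE = 4.184      # thermochemical calorie (assumed standard value)
BOILING_POINT_K = 373.15        # normal boiling point (assumed standard value)

# Per-gram heat of vaporization, in joules and then in calories.
joules_per_gram = H_VAP_J_PER_MOL / MOLAR_MASS_G_PER_MOL
calories_per_gram = joules_per_gram / JOULES_PER_CALORIE

# Entropy of vaporization: heat absorbed reversibly divided by temperature.
delta_s_vap = H_VAP_J_PER_MOL / BOILING_POINT_K  # J/(mol*K)

print(round(calories_per_gram, 1), round(delta_s_vap, 1))
```

Under these assumptions the conversion gives roughly 539 calories per gram and an entropy of vaporization of roughly 109 J/(mol·K).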

Who discovered entropy?

The concept of entropy provides deep insight into the direction of spontaneous change for many everyday phenomena. Its introduction by the German physicist Rudolf Clausius in 1850 is a highlight of 19th-century physics.

Is entropy chaos?

Entropy is not disorder or chaos or complexity or progress towards those states. Entropy is a metric, a measure of the number of different ways that a set of objects can be arranged.

Are entropy and enthalpy the same?

Difference Between Enthalpy and Entropy

- Enthalpy is a kind of energy; entropy is a property.

- Enthalpy is the sum of internal energy and flow energy; entropy is the measurement of the randomness of molecules.

- Enthalpy is denoted by the symbol H; entropy is denoted by the symbol S.

What are units for enthalpy?

The SI unit for specific enthalpy is the joule per kilogram. Specific enthalpy can be expressed in terms of other specific quantities as h = u + pv, where u is the specific internal energy, p is the pressure, and v is the specific volume, which is equal to 1/ρ, where ρ is the density.
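
The relation h = u + pv is easy to check numerically. In the Python sketch below, the specific internal energy, pressure and density are hypothetical inputs chosen to resemble liquid water near room conditions; they are not taken from a property table.

```python
def specific_enthalpy(u: float, p: float, density: float) -> float:
    """Return h = u + p*v in J/kg, with specific volume v = 1/density."""
    if density <= 0:
        raise ValueError("density must be positive")
    v = 1.0 / density  # specific volume, m^3/kg
    return u + p * v


u = 83_900.0      # hypothetical specific internal energy, J/kg
p = 101_325.0     # pressure, Pa (one standard atmosphere)
rho = 1_000.0     # hypothetical density, kg/m^3

h = specific_enthalpy(u, p, rho)
print(h)
```

For a dense liquid the pv term is small compared with u, so h and u are nearly equal; for gases, where the density is far lower, the pv term matters much more.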

Which law is entropy?

The second law of thermodynamics states that the total entropy of an isolated system (or of a system together with its surroundings) either increases or remains constant in any spontaneous process; it never decreases.

Can entropy change be zero?

The entropy change of a system is zero if the state of the system does not change during the process. For example, the entropy change of steady-flow devices such as nozzles, compressors, turbines, pumps, and heat exchangers is zero during steady operation.

What is entropy class 12?

Entropy is defined as the measure of randomness or disorder of a thermodynamic system. It is a thermodynamic function represented by ‘S’. One characteristic of entropy is that its value depends on the amount of substance present in the system.

What is entropy Mcq?

Entropy is a measure of randomness or disorder in the system. Entropy is a thermodynamic function and is denoted by S. The higher the entropy, the greater the disorder in an isolated system. A change in entropy in a chemical reaction is related to the rearrangement of atoms from reactants to products.

What is entropy in data science?

Information entropy, or Shannon entropy, quantifies the amount of uncertainty (or surprise) involved in the value of a random variable or the outcome of a random process. Its significance in decision trees is that it allows us to estimate the impurity or heterogeneity of the target variable.
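
One common way decision-tree algorithms use this impurity measure is information gain: the entropy of the labels before a split minus the size-weighted entropy of the groups after it. The Python sketch below is a minimal illustration; the function names and the toy "yes"/"no" labels are hypothetical and not tied to any particular library.

```python
import math
from collections import Counter


def entropy(labels) -> float:
    """Shannon entropy, in bits, of the class labels in a node."""
    total = len(labels)
    counts = Counter(labels)
    return sum(-(c / total) * math.log2(c / total) for c in counts.values())


def information_gain(parent, children) -> float:
    """Parent entropy minus the size-weighted entropy of the child nodes."""
    total = len(parent)
    weighted = sum(len(child) / total * entropy(child) for child in children)
    return entropy(parent) - weighted


parent = ["yes"] * 5 + ["no"] * 5

# A split that separates the classes perfectly removes all impurity.
perfect = information_gain(parent, [["yes"] * 5, ["no"] * 5])

# A split whose children are as mixed as the parent gains nothing.
useless = information_gain(
    parent, [["yes", "yes", "no", "no"], ["yes"] * 3 + ["no"] * 3]
)
print(perfect, useless)
```

A tree builder following this approach would pick, at each node, the candidate split with the largest gain.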

How do you explain entropy to a child?

The entropy of an object is a measure of the amount of energy which is unavailable to do work. Entropy is also a measure of the number of possible arrangements the atoms in a system can have. In this sense, entropy is a measure of uncertainty or randomness.

How does entropy apply to life?

Entropy is simply a measure of disorder and affects all aspects of our daily lives. In fact, you can think of it as nature’s tax. Left unchecked, disorder increases over time. Energy disperses, and systems dissolve into chaos.

What is another word for entropy?

deterioration, breakup, destruction, worsening, anergy, bound entropy, disgregation, falling apart

Can you transfer heat from cold reservoir to hot reservoir?

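Not spontaneously. Heat is never transferred on its own from a cold reservoir to a hot reservoir in nature; moving heat in that direction requires an input of work, as in a refrigerator or heat pump.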

Is Delta S temperature dependent?

Delta H and delta S do vary with temperature, but only weakly, so they are usually treated as constant and taken at their standard-condition values. The equations for the K values at different temperatures include temperature explicitly as a variable, so the main temperature dependence is accounted for there.

Why is entropy q_rev/T?

Here, qirr denotes the inefficient, irreversible heat flow, and qrev denotes the efficient, reversible heat flow. By definition, entropy in the context of heat flow at a given temperature is the extent to which the heat flow affects the number of microstates a system can access at that temperature.