What is airflow Airbnb?


Furthermore, what is Airflow used for?

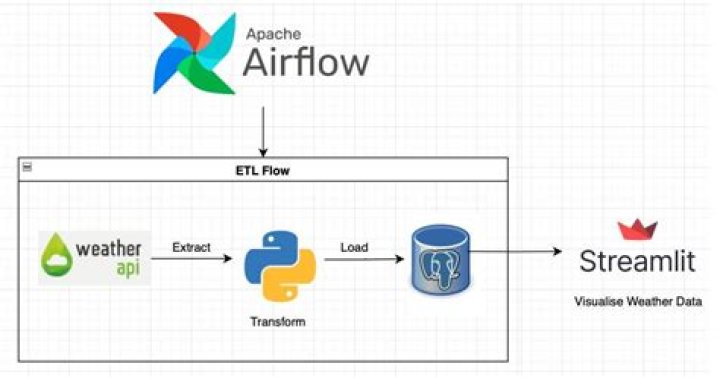

Apache Airflow is a workflow automation and scheduling system that can be used to author and manage data pipelines. Airflow uses workflows made of directed acyclic graphs (DAGs) of tasks.
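To make the "directed acyclic graph of tasks" idea concrete, here is a minimal sketch in plain Python. It does not use Airflow itself; the task names and the `execution_order` helper are hypothetical, and the standard-library `graphlib` module stands in for the dependency handling that Airflow does internally.

```python
from graphlib import CycleError, TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
pipeline = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
}


def execution_order(dependencies):
    """Return one valid run order, or raise ValueError if the graph has a cycle."""
    try:
        return list(TopologicalSorter(dependencies).static_order())
    except CycleError as exc:
        # exc.args[1] holds the list of nodes that form the detected cycle.
        raise ValueError(f"not a DAG: {exc.args[1]}") from exc


order = execution_order(pipeline)
print(order)
```

The "acyclic" part matters because a cycle (task A waiting on B while B waits on A) has no valid run order, which is why the helper rejects such a graph instead of returning a partial result.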

Furthermore, how does the Airflow scheduler work?

The Airflow scheduler monitors all tasks and all DAGs, and triggers the task instances whose dependencies have been met. It is designed to run as a persistent service in an Airflow production environment. To start it, all you need to do is execute airflow scheduler.
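The rule "trigger the task instances whose dependencies have been met" can be sketched in a few lines of plain Python. This is a simplified illustration, not Airflow's scheduler code: `ready_tasks`, `run_until_idle`, the state strings, and the example task names are all assumptions made for this sketch, and the loop exits when nothing is left to trigger instead of running as a persistent service.

```python
def ready_tasks(upstream, states):
    """Return tasks not yet started whose upstream tasks have all succeeded."""
    return sorted(
        task
        for task, deps in upstream.items()
        if states.get(task) is None
        and all(states.get(dep) == "success" for dep in deps)
    )


def run_until_idle(upstream, run_task):
    """Repeatedly trigger ready tasks until no task can be started."""
    states = {}
    triggered = []
    while True:
        batch = ready_tasks(upstream, states)
        if not batch:
            break
        for task in batch:
            # run_task is a caller-supplied callable returning True on success.
            states[task] = "success" if run_task(task) else "failed"
            triggered.append(task)
    return triggered, states


# Hypothetical DAG: task name -> list of upstream task names.
upstream = {
    "extract": [],
    "clean": ["extract"],
    "report": ["clean"],
    "archive": ["extract"],
}

# Simulate a run in which the "clean" task fails.
triggered, states = run_until_idle(upstream, lambda task: task != "clean")
print(triggered, states)
```

In this simulated run, "report" is never triggered because its only upstream task failed, which mirrors how a downstream task stays unscheduled until its dependencies are met.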

Subsequently, one may also ask, when should you not use Airflow?

Examples of use cases that Airflow cannot satisfy in a first-class way include DAGs that need to run off-schedule or with no schedule at all, DAGs that run concurrently with the same start time, and DAGs with complicated branching logic.

How do I check Airflow status?

To check the health status of your Airflow instance, you can access the "/health" endpoint. It returns a JSON object that gives a high-level view of the instance. The status of each component can be either "healthy" or "unhealthy".
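As a rough sketch of how that JSON object might be consumed, the snippet below lists every component whose status is not "healthy". The helper names, the base URL you would pass to `fetch_health`, and the sample payload are assumptions for illustration; the exact set of components in the response depends on your Airflow version.

```python
import json
from urllib.request import urlopen


def unhealthy_components(payload):
    """Return the names of components whose status is not "healthy"."""
    return sorted(
        name
        for name, info in payload.items()
        if isinstance(info, dict) and info.get("status") != "healthy"
    )


def fetch_health(base_url, timeout=5.0):
    """GET <base_url>/health and decode the JSON body (needs a running webserver)."""
    with urlopen(f"{base_url.rstrip('/')}/health", timeout=timeout) as response:
        return json.load(response)


# Illustrative payload with an assumed shape, not captured from a real server.
sample = json.loads(
    '{"metadatabase": {"status": "healthy"}, "scheduler": {"status": "unhealthy"}}'
)
problems = unhealthy_components(sample)
print(problems)
```

Against a live instance you would call `unhealthy_components(fetch_health(base_url))` with your own webserver address; an empty list then means every reported component is healthy.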

Related Question Answers

How do you stop a DAG in Airflow?

You can stop a DAG (unmark it as running) and clear the task states, or even delete them, in the UI. Tasks that are actually running in the executor won't stop right away, but they might be killed if the executor realizes that they are no longer in the database. Setting a task to the failed state will also stop the running task.

How do you make an Airflow DAG?

Create a DAG file. Go to the folder that you've designated as your AIRFLOW_HOME and find the DAGs folder in the subfolder dags/ (if you cannot find it, check the dags_folder setting in $AIRFLOW_HOME/airflow.cfg). Create a Python file named airflow_tutorial.py that will contain your DAG.

How do you run DAGs in Airflow?

Running your DAG. When you reload the Airflow UI in your browser, you should see your hello_world DAG listed. To start a DAG Run, first turn the workflow on (arrow 1), then click the Trigger Dag button (arrow 2), and finally click Graph View (arrow 3) to see the progress of the run.

What is a DAG in Airflow?

In Airflow, a DAG, or Directed Acyclic Graph, is a collection of all the tasks you want to run, organized in a way that reflects their relationships and dependencies. DAGs are defined in standard Python files that are placed in Airflow's DAG_FOLDER.

What is AirFlow treatment?

Airflow polishing is a dental hygiene treatment that effectively removes stains on the front and back of teeth. The procedure uses a fine jet of compressed air, water, and fine powder particles to remove staining caused by coffee, tea, red wine, tobacco, and some mouthwashes.

What companies use Airflow?

133 companies reportedly use Airflow in their tech stacks, including Airbnb, Slack, and 9GAG.

- Airbnb.

- Slack.

- 9GAG.

- Square.

- Robinhood.

- Banksalad.

- Lime.

- Trustpilot.

What is AWS Airflow?

Apache Airflow is an open-source platform to programmatically author, schedule, and monitor workflows, and it can be deployed in the cloud or on-premises. Amazon SageMaker joins other AWS services such as Amazon S3, Amazon EMR, AWS Batch, Amazon Redshift, and many others as services with their own Airflow operators.

What is airflow?

Airflow, or air flow, is the movement of air from one area to another. Air behaves like a fluid, meaning particles naturally flow from areas of higher pressure to those where the pressure is lower. Atmospheric air pressure is directly related to altitude, temperature, and composition.

What is an operator in Airflow?

An operator in Airflow defines a dedicated task. Operators generally implement a single assignment and do not need to share resources with other operators. They must be called in the correct order in the DAG, and they generally run independently. An Airflow DAG consists of operators that implement its tasks.

How do you deploy Airflow on Kubernetes?

Launching a test deployment

- Step 1: Set your kubeconfig to point to a Kubernetes cluster.

- Step 2: Clone the Airflow Repo: Run git clone to clone the official Airflow repo.

- Step 3: Run.

- Step 4: Log into your webserver.

- Step 5: Upload a test document.

- Step 6: Enjoy!

What is the DAG?

In Microsoft Exchange Server, a DAG (database availability group) is a boundary for mailbox database replication, database and server switchovers and failovers, and an internal component called Active Manager. Active Manager, which runs on every Mailbox server, manages switchovers and failovers within DAGs.

Who wrote Airflow?

Apache Airflow

| Original author(s) | Maxime Beauchemin |
|---|---|
| Operating system | Microsoft Windows, macOS, Linux |
| Written in | Python |
| Type | Workflow management platform |
| License | Apache License 2.0 |